
Medicare Health Support: 8 Takeaways on Building Better Bridges

by Thomas Wilson, PhD, DrPH and Vince Kuraitis

What’s the right metaphor for Medicare Health Support (MHS), CMS’ major experiment with disease management for Medicare beneficiaries? We prefer to look at it as a bridge failure that presents an opportunity to improve future engineering and design.

We’ve now had time to read, reread, and reread again the very recent report from Research Triangle Institute (RTI), Evaluation of Phase I of the Medicare Health Support Pilot Program Under Traditional Fee-for-Service Medicare: 18-Month Interim Analysis. Here’s a list of our 8 key takeaways:

- There’s Sufficient Evidence to Conclude "MHS Didn’t Work As Expected"

- Some Quality Measures in MHS Improved, Yet Outcomes Didn’t. Why?

- MHS Suffered Execution Nightmares

- Ronald Reagan Was Right — “Trust, But Verify”

- MHS Has Implications for the Medicare Medical Home Demo (MMHD)

- Be Wary of Claims from Pre-Post Studies

- Differences Between Medicare and Commercial DM are Dramatic

- The Guaranteed Savings Model is a Two-Edged Sword

Let’s examine these one at a time.

Takeaway #1: There’s Sufficient Evidence to Conclude "MHS Didn’t Work As Expected"

In a blog post last January, we criticized CMS and argued that there was Insufficient Evidence to End Medicare Health Support.

OK, now there’s sufficient evidence to end MHS. More than sufficient evidence. We don’t want to beat a dead horse with the details, so we’ll simply point you to the executive summary of the RTI report or to Dr. Jaan Sidorov’s concise recap.

And even though the RTI study technically marks the halfway point for reporting on MHS, for all practical purposes the program is over, and we need to ask: “What can we learn from this bridge failure?”

Please don’t mistake our point: we’re not letting CMS off the hook for holding back information from the public. We’re simply saying that NOW we have enough evidence to agree that a Phase II MHS is not justified.

We do commend RTI for producing an illuminating report. Some of the things we found particularly helpful:

- The RTI report lists names of specific DM companies in text and tables.

- Several industry commentators (us included) had suggested additional analyses to identify possible subgroups in which DM was having positive effects. This report does just that. For example, some analyses stratify the sample into 5 groups: (1) heart failure (HF) only, (2) diabetes only, (3) HF with or without diabetes, (4) diabetes with or without HF, and (5) HF and diabetes. While this stratification did not provide evidence that DM was effective in any subgroup, it comes close to leaving no stone unturned.

- The report provides many enlightening qualitative details about MHS. For example, it explains why three companies requested early termination; it describes diverse and creative approaches to patient and doctor outreach; and it incorporates Medicare Health Support Organization (MHSO) feedback on program design. The commentary helps readers understand the challenges the MHSOs experienced.

Takeaway #2: Some Quality Measures in MHS Improved, Yet Outcomes Didn’t. Why?

We were struck by the reported finding that 40% of the quality metrics improved. Yet there was no significant difference in the most important outcomes (financial results and hospital utilization) between the reference group and the group selected for the intervention.

We’ve seen things like this before in clinical studies: process metrics (e.g., HbA1c screening rates) improved, but outcome metrics (e.g., HbA1c levels) did not. The fact that HbA1c levels did not improve does not mean the community health providers are failures.

Similarly in the MHS project, the DM companies obviously targeted quality measures, and the fact that at least some of them improved is good news.

So what happened? Simply this: the hypothesized pathway from improved quality (process) measures to improved outcomes ain’t necessarily so. Most importantly, a hypothesis that does not come true should NEVER be considered a failure.

"I have not failed. I’ve just found 10,000 ways that won’t work." Thomas Edison

We need to keep trying, but we must change the way we try. We all know the definition of insanity: Doing the same thing over and over again and expecting different results.

The DM companies have already changed, but what else needs to change? We need to dig into the data and learn more about the pathway between quality and outcomes, and we need a lot of eyeballs looking at this, as Sandra Foote argued in her most recent Health Affairs article.

Takeaway #3: MHS Suffered Execution Nightmares

We come away with a few overall impressions of just how mismatched levels of effort were on this project.

First, the MHSOs put a tremendous amount of effort into creating back-office and information systems to gather the basic data needed to run their programs. While Medicare had promised routine data updates about patients, the MHSOs found that the data were neither specific nor timely enough to be useful for modifying care plans for individual patients.

Here’s just one example. One critical piece of information the MHSOs wanted was immediate notification when patients were admitted to a hospital. The information they received from CMS was one to two months old — too late to be useful; they also found that patients and families did not notify them directly when a beneficiary was hospitalized.

What to do? The MHSOs attempted numerous workarounds, with varying degrees of success. One tried to link its IT system with a hospital’s IT system; a second attempted, and then abandoned, efforts to obtain discharge information directly from hospitals; several established data-sharing agreements with claims processing companies. The approaches were diverse and creative, yet they were only groundwork for the major thrust of the program: direct support of patients.

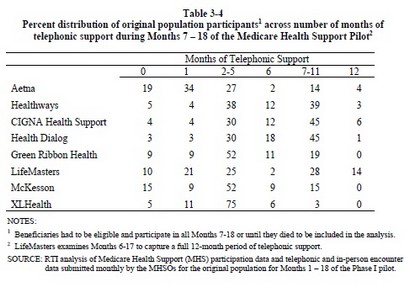

Our second impression is that this high level of back-end infrastructure creation was a mismatch with the surprisingly low level of patient contacts. RTI reported that in months 7–18 of the project “the majority of fully eligible and participating beneficiaries received between 2 and 5 months of telephonic support” (defined as at least one phone call in a given month).

The MHSOs should explain what appears to be embarrassingly low levels of patient contact shown in the following table:

Here’s an example of how to interpret the data in the table. For Aetna, 19% of patients received no telephone contact, 27% received between 2 and 5 months of telephone support (1 or more calls in a month), and only 4% were contacted in all 12 months.
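RTI counts a month of telephonic support as any month with at least one phone call. As a rough illustration only, the sketch below shows one way such a distribution could be tabulated from a call log. The log format, the beneficiary IDs, and the bucket boundaries other than "none," "2 to 5," and "all 12" are our own hypothetical choices, not RTI's specification.

```python
from collections import Counter

# Hypothetical grouping of program months 7-18 (not RTI's exact table layout).
BUCKETS = ["0", "1", "2-5", "6-11", "12"]

def months_of_support(call_log, enrolled_ids):
    """Count distinct months with at least one call for each enrolled beneficiary.

    call_log is a hypothetical list of (beneficiary_id, program_month) pairs,
    one per call; several calls in the same month count as one month.
    """
    months = {bid: set() for bid in enrolled_ids}
    for bid, month in call_log:
        if bid in months:
            months[bid].add(month)
    return {bid: len(ms) for bid, ms in months.items()}

def bucket(n_months):
    """Map a count of supported months to a display bucket."""
    if n_months <= 1:
        return str(n_months)
    if n_months <= 5:
        return "2-5"
    if n_months <= 11:
        return "6-11"
    return "12"

def support_distribution(call_log, enrolled_ids):
    """Percent of enrolled beneficiaries falling in each months-of-support bucket."""
    counts = Counter(bucket(n) for n in months_of_support(call_log, enrolled_ids).values())
    return {b: 100.0 * counts[b] / len(enrolled_ids) for b in BUCKETS}

# Toy example: four beneficiaries observed over program months 7-18.
enrolled = ["A", "B", "C", "D"]
log = [("A", 7), ("A", 7), ("A", 9), ("C", 8)] + [("D", m) for m in range(7, 19)]
print(support_distribution(log, enrolled))
# {'0': 25.0, '1': 25.0, '2-5': 25.0, '6-11': 0.0, '12': 25.0}
```

Note that beneficiary A had three calls but only two months of support, which is why counting calls and counting months can tell very different stories.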

Takeaway #4: Ronald Reagan Was Right — “Trust, But Verify”

We believe in a limited view of transparency. There is a legitimate use of opacity, secrecy and protected intellectual property in the business of health care. But the methods used to make claims for products and services in health care — as well as the data (input) and results (output) — must be transparent and replicable.

So what does the RTI report teach us about transparency and replicability for the future of chronic disease management and evaluation? Here is our list, in descending priority order:

1) There was a noted lack of timely information on hospitalizations, according to one vendor (p. 23). In commercial populations, this information is often available in "prior authorization" data. Could this have been part of the failure of MHS?

2) One vendor asked for detailed claims data on the reference group but was not pleased with the data set CMS provided (pp. 24–25): it excluded date of service. We find this unacceptable and counterintuitive. Future projects should make all data on the reference group available, with appropriate safeguards.

3) In the end, this is a "comparative effectiveness" exercise comparing DM to standard-of-care interventions. Thus, the statistical comparisons between the intervention and reference groups must be considered of the utmost importance. But were they?

- RTI stated that it used "t-tests" to compare metrics but did not show the results. Why not? In the old days, before the Internet, standard publications did not have enough space. We don’t have that excuse. We would like to see ALL detailed data results and calculations, with variance and other statistics listed (like skewness and kurtosis).

- They also used "ANCOVA" (analysis of covariance) as a statistical tool to "control" for confounders. Sounds great, but so did credit default swaps until a few months ago; what you don’t know can hurt you. This is a statistical tool that "assumes that the residuals are normally distributed and homoscedastic." Were they?
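The t-test bullet asks for more than a bare test result. As a hedged illustration using hypothetical cost data and only the Python standard library, the sketch below reports sample size, mean, variance, skewness, and excess kurtosis for each group alongside Welch's two-sample t statistic and its approximate degrees of freedom. We do not know which t-test variant RTI used; Welch's version, which does not assume equal variances, is our choice here.

```python
import math
import statistics

def describe(xs):
    """Sample size, mean, variance, skewness and excess kurtosis of one group."""
    n = len(xs)
    mean = statistics.mean(xs)
    m2 = sum((x - mean) ** 2 for x in xs) / n   # central moments (divide by n)
    m3 = sum((x - mean) ** 3 for x in xs) / n
    m4 = sum((x - mean) ** 4 for x in xs) / n
    return {
        "n": n,
        "mean": mean,
        "variance": statistics.variance(xs),    # sample variance (n - 1 denominator)
        "skewness": m3 / m2 ** 1.5,             # moment-based, not bias-corrected
        "excess_kurtosis": m4 / m2 ** 2 - 3.0,  # 0 for a normal distribution
    }

def welch_t(a, b):
    """Welch's two-sample t statistic and Welch-Satterthwaite degrees of freedom."""
    da, db = describe(a), describe(b)
    va = da["variance"] / da["n"]
    vb = db["variance"] / db["n"]
    t = (da["mean"] - db["mean"]) / math.sqrt(va + vb)
    df = (va + vb) ** 2 / (va ** 2 / (da["n"] - 1) + vb ** 2 / (db["n"] - 1))
    return t, df

# Hypothetical monthly costs per beneficiary (dollars), each with one large outlier.
intervention = [820, 950, 1010, 1200, 4300, 760, 890, 1100]
reference = [800, 990, 1050, 1150, 3900, 700, 920, 1080]
for name, xs in [("intervention", intervention), ("reference", reference)]:
    print(name, describe(xs))
t, df = welch_t(intervention, reference)
print(f"Welch t = {t:.3f}, df = {df:.1f}")
```

A p-value needs the t distribution's CDF, which the standard library lacks; `scipy.stats.ttest_ind(a, b, equal_var=False)` computes the same Welch statistic together with a p-value. Skewed cost data like this toy example are exactly where the skewness and kurtosis figures matter for judging whether a normal-theory test is trustworthy.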

As our elementary math teachers demanded of both of us: Show your work! And as our graduate instructors in statistics said: State your assumptions! We want to see all work, all assumptions, the extent to which assumptions are met, and the strengths and weaknesses of the work. We don’t want a repeat of the financial meltdown in the health care industry due to opacity and lack of understanding of the basics of statistics.
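"State your assumptions!" can be made concrete for the ANCOVA bullet. The hedged sketch below uses standard-library Python and hypothetical toy data; we do not know RTI's exact model specification. It fits a one-covariate ANCOVA with a common slope (for example, follow-up cost adjusted for baseline cost), reports the covariate-adjusted group difference, and then checks one of the assumptions RTI quotes, equal residual variance across groups, using the median-centered Levene statistic (the Brown-Forsythe variant).

```python
import statistics

def ancova_common_slope(groups):
    """One-covariate ANCOVA: y = group intercept + common slope * x.

    `groups` maps a label to a list of (x, y) pairs, e.g. x = baseline cost
    and y = follow-up cost. Returns the pooled within-group slope, each
    group's (mean x, mean y), and each group's residuals.
    """
    sxy = sxx = 0.0
    means = {}
    for label, pairs in groups.items():
        mx = statistics.mean(x for x, _ in pairs)
        my = statistics.mean(y for _, y in pairs)
        means[label] = (mx, my)
        sxy += sum((x - mx) * (y - my) for x, y in pairs)
        sxx += sum((x - mx) ** 2 for x, _ in pairs)
    slope = sxy / sxx
    residuals = {
        label: [y - means[label][1] - slope * (x - means[label][0]) for x, y in pairs]
        for label, pairs in groups.items()
    }
    return slope, means, residuals

def adjusted_difference(slope, means, treated, control):
    """Covariate-adjusted difference in mean outcome (treated minus control)."""
    (mx_t, my_t), (mx_c, my_c) = means[treated], means[control]
    return (my_t - my_c) - slope * (mx_t - mx_c)

def brown_forsythe_w(residuals):
    """Median-centered Levene statistic for equal residual variance across groups.

    Compare against an F(k - 1, N - k) distribution; a large value suggests
    the homoscedasticity assumption does not hold.
    """
    z = {}
    for label, rs in residuals.items():
        med = statistics.median(rs)
        z[label] = [abs(r - med) for r in rs]
    k = len(z)
    n_total = sum(len(v) for v in z.values())
    grand = sum(sum(v) for v in z.values()) / n_total
    group_means = {label: statistics.mean(v) for label, v in z.items()}
    between = sum(len(v) * (group_means[g] - grand) ** 2 for g, v in z.items())
    within = sum((x - group_means[g]) ** 2 for g, v in z.items() for x in v)
    return (n_total - k) / (k - 1) * between / within

# Hypothetical toy data: x = baseline value, y = follow-up value.
groups = {
    "reference": [(1, 2), (2, 5), (3, 6)],
    "intervention": [(1, 2), (2, 6), (3, 6)],
}
slope, means, residuals = ancova_common_slope(groups)
print("common slope:", slope)
print("adjusted difference:", adjusted_difference(slope, means, "intervention", "reference"))
print("Brown-Forsythe W:", brown_forsythe_w(residuals))
```

This checks only one assumption. Normality of the pooled residuals can be examined with a Q-Q plot or a formal test such as `scipy.stats.shapiro`, and ANCOVA also assumes the covariate slope is the same in every group. `scipy.stats.levene(*samples, center="median")` returns the same statistic along with a p-value.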

- RTI stated that "actuarial adjustments" were made because of the non-equivalence of the reference and selected intervention populations, but we did not see any detail as to how that was done. We made the point in an earlier blog post that any adjustment for what appeared to be significant non-equivalence at baseline must be transparent. The "actuarial adjustment" may have been something exclusive to CMS (p. 60, footnote 23), but that is not clear.
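We don't know what the "actuarial adjustment" was, and that is the point. To show why the method matters, here is a hypothetical example (our numbers, not MHS data) that applies two generic ways of adjusting for baseline non-equivalence to the same per-member-per-month (PMPM) costs: carrying forward the reference group's absolute dollar change, or carrying forward its percentage change. Neither is claimed to be what CMS did.

```python
def unadjusted_savings(int_follow, ref_follow):
    """Follow-up difference only; ignores that the groups started at different levels."""
    return ref_follow - int_follow

def difference_adjusted_savings(int_base, int_follow, ref_base, ref_follow):
    """Expected intervention cost = its baseline plus the reference group's dollar change."""
    expected = int_base + (ref_follow - ref_base)
    return expected - int_follow

def ratio_adjusted_savings(int_base, int_follow, ref_base, ref_follow):
    """Expected intervention cost = its baseline times the reference group's trend factor."""
    expected = int_base * (ref_follow / ref_base)
    return expected - int_follow

# Hypothetical PMPM costs: the intervention group starts $100 higher than the reference.
args = dict(int_base=1000.0, int_follow=1100.0, ref_base=900.0, ref_follow=1000.0)
print(unadjusted_savings(args["int_follow"], args["ref_follow"]))  # -100.0
print(difference_adjusted_savings(**args))                          # 0.0
print(ratio_adjusted_savings(**args))                               # roughly 11.11
```

Same data, three answers: a $100 loss, break-even, or a small saving. That spread is why the adjustment method needs to be disclosed.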

4) Finally, we see no way for either vendors (p. 25) or qualified third parties to replicate these findings. Since this program is publicly financed, there should be provisions from day one to make the data available, in as detailed a fashion as possible, to all qualified third parties.

In the end, knowledge is based on transparency of methods and data and on the ability to replicate findings. Remember the 17th-century motto of Sir Isaac Newton’s Royal Society of London:

Nullius in verba ("don’t take anyone’s word for it").

Takeaway #5: MHS Has Implications for the Medicare Medical Home Demo (MMHD)

Based on the experience of MHS, we can begin to anticipate how comparable processes will need to be carried out by physicians in the upcoming MMHD.

Patient enrollment should be easier for physicians in MMHD.

To enroll a patient in MHS, the MHSO needed to:

- Get the patient’s name and contact information from CMS. A substantial amount of this information was incomplete or incorrect.

- Contact the person by phone. Reaching many beneficiaries took multiple calls, and many were suspicious or not interested.

- Explain program details. The DM program staff had no prior contact with beneficiaries and did not have pre-established trust or positive relationships.

- Get the patient’s explicit consent to participate in the program, then tell them “we’ll be back to you when the program starts in a few weeks.”

- Recontact the patient at program start, often refreshing the patient’s memory about the program, the DM company, expectations, etc.

To enroll a patient in MMHD, a physician (during a routine office visit) will need to:

- Explain the program briefly to the patient and recommend that the patient participate.

- Hand a form to the patient, who in almost all circumstances will dutifully sign.

- Done.

George Bennett, CEO of Health Dialog, explained at the 2007 DMAA Conference that this enrollment process consumed about 25–30% of the company’s total program costs. That’s startling, and physicians will not need to incur this level of expense or effort.

Data gathering could be even more challenging in MMHD.

A major complaint of the MHSOs was that CMS did not deliver timely and specific information as promised.

CMS’ “answer” to this problem is to promise NOTHING for the MMHD contractors. From the December 2008 MMHD Design Clarification FAQ:

Q. Can CMS provide interim data to participating Medical Home practices regarding their own practice’s experience under the Demonstration?

A. CMS is unable to provide data in a timely manner that would be useful for practices to monitor their performance under the Demonstration.

Infrastructure development will be more challenging for physicians in the MMHD. Read the RTI report and experience the headaches from the MHSO point of view — and these were large companies with experienced managers and pre-existing DM programs. Physicians in MMHD will not have comparable resources, management talent, or existing DM programs.

Patient contact should be easier for physicians in the MMHD. Unfortunately, much of this is because the MHSOs simply had such little contact with patients (see above). In the MMHD, patients will have a preexisting, trusting relationship with their physicians. Many patients, perhaps most, will visit physicians for routine care. Physicians’ main challenge will be developing systems to contact patients in-between visits.

Our conclusion here: expect significant execution challenges in MMHD.

Takeaway #6: Be Wary of Claims from Pre-Post Studies

In the most recent Health Affairs (which was accepted for publication before the latest RTI report), Bott et al. stated: "Up to now, the CMS, like other payers, has accepted clinical rationales and the promise of cost savings to undertake DM demonstrations."

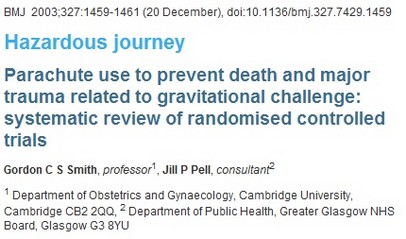

The promised cost savings for this trial were based on results from pre-intervention/post-intervention studies. These can be seriously flawed unless the question "What happens in the absence of DM?" has been answered definitively (e.g., no controlled trial is needed to establish the value of a parachute when jumping from an airplane).

As was noted by RTI (p. 60, note 22) and by Mathematica researchers reporting on another CMS trial (the Medicare Care Coordination Demo), people with chronic diseases, with or without DM, showed changes* in outcomes that were very similar in the reference and DM groups! Without an equivalent reference group, these "changes" can be mistakenly attributed to the intervention (and often are).

Since "past is not [necessarily] prologue,"** we strongly urge public and private sector buyers of DM and other population health programs to be extremely cautious about basing funding decisions for chronic disease population management programs on results from pre-post studies alone.

* Note: These changes can go up or down. When they go down, they are often attributed to "regression to the mean," but changes can go up as well, as was seen in a Medicare P4P trial.

** From Aristotle and friends: there is a famous logical fallacy called "post hoc ergo propter hoc," meaning "after this, therefore because of this." It’s all Greek to us (even though it is Latin). Be careful.
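The footnoted point about pre-post "changes" can be illustrated with a small simulation. The model below is entirely hypothetical and is not MHS data: normally distributed costs, a stable personal cost level plus independent year-to-year noise, and enrollment of the top 20% by baseline cost. No one receives any intervention, yet both randomly split groups show sizable pre-post "savings"; only the comparison between the two groups comes out near zero.

```python
import random
import statistics

def simulate_pre_post(n=20000, seed=1):
    """Regression to the mean with no intervention effect at all (hypothetical model)."""
    rng = random.Random(seed)
    people = []
    for _ in range(n):
        level = rng.gauss(1000, 300)            # stable underlying cost level
        baseline = level + rng.gauss(0, 300)    # noisy baseline year
        follow_up = level + rng.gauss(0, 300)   # noisy follow-up year, no intervention
        people.append((baseline, follow_up))

    # Enroll the 20% with the highest baseline costs, as a high-risk program might.
    people.sort(key=lambda p: p[0], reverse=True)
    selected = people[: n // 5]

    # Randomly split the enrolled people into a "DM" group and a reference group.
    rng.shuffle(selected)
    half = len(selected) // 2
    groups = {"dm": selected[:half], "reference": selected[half:]}

    results = {}
    for name, members in groups.items():
        pre = statistics.mean(b for b, _ in members)
        post = statistics.mean(f for _, f in members)
        results[name] = pre - post              # apparent pre-post "savings" per person
    results["difference_in_differences"] = results["dm"] - results["reference"]
    return results

print(simulate_pre_post())
```

The same mechanism pushes follow-up values up when a group is selected for unusually low baseline values, which is one way "changes" can go in either direction.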

Takeaway #7: Differences Between Medicare and Commercial DM are Dramatic

For now, we will just note the point: providing disease management services to Medicare patients is a totally different game than serving commercial (under age 65) insurance patients. The details and implications are complex, so we’ll save this for a future posting.

Takeaway #8: The Guaranteed Savings Model is a Two-Edged Sword

What did the MHS project cost taxpayers? There are two different points of view here, and we will start by providing some background.

MHS was modeled after the “guaranteed savings” approach prevalent in commercial DM in 2002. When the RFP for MHS was released early in 2004, it read "come one, come all" and encouraged any interested parties to submit proposals.

The initial level of interest was high and diverse. Over 500 people attended the initial bidders conference in person and another 200 listened on the phone. The interested group represented virtually every sector of health care — including doctors and hospitals.

The guaranteed-savings model of MHS was familiar only to DM companies and health plans. In essence, contractors were at risk of forfeiting up to 100% of their fees if they did not hit satisfaction, outcome, AND financial targets.

Once they understood the risks of this reimbursement model, providers turned away; many decided not to submit proposals.

Ultimately all the awardees of MHS contracts were either health plans or DM companies.

As it turns out, it looks like MHS contractors will indeed be paying back almost all of the "fees" they received up front and guaranteed to CMS. While the tab has not yet been totaled, repayments to CMS will amount to several hundred million dollars.
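To make the "fees at risk" mechanics concrete, here is a deliberately simplified, hypothetical reconciliation rule that covers only the financial target (the satisfaction and outcome targets are ignored). Following the "5% net of fees" figure a commenter mentions below, we assume the contractor must deliver gross savings that cover its fees plus a net savings target. This is our simplification, not the actual MHS contract formula.

```python
def fee_repayment(fees_paid, expected_cost, actual_cost, net_target=0.05):
    """Hypothetical guaranteed-savings reconciliation (financial target only).

    The contractor keeps its fees only if gross savings cover the fees plus a
    net savings target, expressed as a fraction of expected (baseline) cost.
    Any shortfall is repaid, capped at 100% of the fees received.
    """
    gross_savings = expected_cost - actual_cost
    required_savings = fees_paid + net_target * expected_cost
    shortfall = max(0.0, required_savings - gross_savings)
    return min(fees_paid, shortfall)

# Hypothetical amounts (in $ millions) for a single contractor.
for actual in (100.0, 92.0, 88.0):
    repaid = fee_repayment(fees_paid=5.0, expected_cost=100.0, actual_cost=actual)
    print(f"actual cost {actual}: repay {repaid}")
```

With no savings at all, the whole fee goes back; partial savings lead to partial repayment, and only savings above fees plus target let the contractor keep everything.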

So what’s the “cost” to taxpayers?

POV #1: Almost nothing. The “successful” MHSO vendors took the business risk (and lost). The only taxpayer expense is CMS’ administrative costs.

POV #2 (our opinion): billions of dollars in opportunity costs. MHS tested a very narrow model of DM with a narrow group of contractors. Other potential models of DM — especially ones driven by providers — were not selected.

We conclude that the real loss to taxpayers is the stifling of innovation that came from limiting MHS awardees to large DM companies and health plans. Now, five years later, we’ve learned a lot about which DM approaches don’t work in Medicare, but little about what will work.

It’s time to move on to the next big thing — the Medicare Medical Home Demonstration. Check back in 2014.


To conclude where we started, what’s the right metaphor for MHS?

While the MHS bridge failed, we have gained many lessons in bridge building.

This work is licensed under a Creative Commons Attribution-Share Alike 3.0 Unported License. Feel free to republish this post with attribution.

Very insightful and well written. My ‘plain old English’ take on this is that when one starts with chaos or a lack of management, something as simple as telephonic support can probably add some value. However, evidence from many quarters suggests that this tool has limits in moving patients toward healthier behaviors and thus to a lower-cost spot on the health cost continuum.

The tools required to bring us to the next level are more complex, more costly and harder to scale (at least at present). They involve components that serve to educate patients about their illness in real time and in the teachable moment, to derive real-time, true illness-relevant data from those patients and use that data as a substrate for coaching, again at the very moment when coaching makes a difference. Our work at the Center for Connected Health is testament to how valuable this sort of approach can be, but also how challenging it is to scale.

Medicare patients are indeed different than commercial patients, but as you say we need to learn from this grand experiment and move forward because if we don’t find ways to improve the quality of care for this population while controlling costs we’ll continue to see an unacceptable financial drain on our country.

There is hope. Some of the ‘Care Management for High-Cost Beneficiaries’ demonstration projects are showing they can achieve the financial targets set by CMS while improving the care of the beneficiaries covered. Our success in doing so at MGH has been through the implementation of a model that looks a whole lot like the medical home.

So perhaps the answer is a mix of motivated providers, incented the right way, with the right patient facing technologies and support staff.

I quite uncharacteristically declined requests to work on this for CMS because according to my math it was simply not possible to save 5% net of fees. (And to be honest they also don’t pay well.)

Saving 5% net requires roughly 10% gross savings. Since hospital costs are about 50% of total expense, that means 20% savings in hospital costs, since nothing else is avoidable through DM and other costs often rise a little.

Since even optimistically only about half of all admits are even possibly avoidable through DM, that means you’d have to avoid 40% of all avoidable hospitalizations overall, no easy feat when you only have conversations with about half of all members. That would mean 80% avoidance among those engaged members.
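The commenter's back-of-envelope chain can be written out step by step. The shares are the commenter's rough figures; the 5% fee share is our inference from "5% net requires roughly 10% gross," and the function and parameter names are our own.

```python
def required_avoidance(net_target=0.05, fee_share=0.05, hospital_share=0.50,
                       avoidable_share=0.50, engaged_share=0.50):
    """Back-of-envelope chain from a net savings target to required avoidance.

    Assumes all savings come from hospital costs and that only engaged
    members' avoidable admissions can be prevented.
    """
    gross = net_target + fee_share             # savings needed before fees
    hospital = gross / hospital_share          # cut needed in hospital costs
    avoidable = hospital / avoidable_share     # share of avoidable admits to prevent
    engaged = avoidable / engaged_share        # same, among engaged members only
    return {"gross": gross, "hospital": hospital,
            "avoidable": avoidable, "engaged": engaged}

for step, share in required_avoidance().items():
    print(f"{step:>9}: {share:.0%}")
```

Each step divides by a share, so pessimism compounds quickly: if only a third of members were engaged, the required avoidance among them would be 120%, which is impossible.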

Since many avoidable hospitalizations follow closely on the heels of previous hospitalizations, one would have to save more than 100% of all avoidable hospitalizations occurring in the engaged population after the date on which the vendor finally received data showing that the engaged member had indeed been hospitalized.

And while I know your co-author disagrees with me on whether it is possible to save more than 100%, I maintain that it isn’t.

Having said that I was as surprised as anyone that the programs didn’t cover their costs and I think this very thoughtful posting suggests many good explanations for that.

The way to measure outcomes — the way the plurality of payors now measure — is to look at trends in event rates. Totally transparent. And yet with all the analyses done by CMS and suggested on this posting, no one suggested simply seeing if the adverse event rates for the study population improved relative to the control group.
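The event-rate comparison described in this comment could look something like the sketch below. Everything here is hypothetical: the counts, the member-month exposure, and the choice of a ratio of rate trends as the summary. We make no claim that this is how any particular payor computes it.

```python
def events_per_1000(events, member_months):
    """Adverse-event rate per 1,000 member-years of exposure."""
    return 1000.0 * events / (member_months / 12.0)

def relative_trend(study, control):
    """Study-group rate trend relative to the control-group trend.

    Each argument maps "baseline" and "follow_up" to (events, member_months).
    Returns (study follow-up / baseline) / (control follow-up / baseline) - 1;
    a negative value means the study group's event rate improved relative to control.
    """
    def trend(group):
        return events_per_1000(*group["follow_up"]) / events_per_1000(*group["baseline"])
    return trend(study) / trend(control) - 1.0

# Hypothetical counts: (adverse events, member-months) in each period.
study = {"baseline": (300, 12000), "follow_up": (240, 12000)}
control = {"baseline": (310, 12000), "follow_up": (279, 12000)}
print(f"relative change: {relative_trend(study, control):.1%}")
```

Here both groups improve, but the study group improves more; comparing trends rather than raw pre-post changes is what guards against crediting the program for improvement the control group also showed.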

Vince! Great job. No need for a long-winded reply here. The table you provided says it all. The lack of contact with members seems to be one of the biggest challenges. Also, in response to point #8, I am willing to bet that some of the DM companies actually wanted risk to keep other players out of the trials. One last point on Al’s response: yup, a decrease in events would work, but it would have to match the connectivity from the table in VK’s post. If you did not contact the member, you should not take credit for it.

I would agree with Arthur on his last point in the MACRO sense. If you only have 50 conversations and you are claiming that events fell by 51, well, that’s very fuzzy. However, you can’t really tie the exact individuals who did NOT have an event to the ones you called. Plenty of people you call would not have had events anyway.

But another way I read your point is: if you could somehow take out the people who were called and look at everyone else, and the event rate fell, you certainly couldn’t claim credit for that.